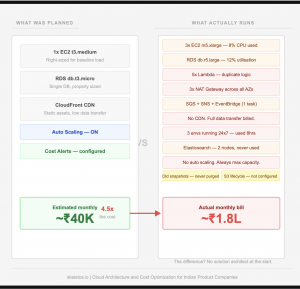

Every few months, somewhere in the Indian startup and product company ecosystem, a finance team opens the AWS billing dashboard and has a quiet panic.

The bill has doubled. Or tripled. Sometimes more. And the engineering team, when asked, cannot immediately explain why.

This is not rare. It is one of the most common and most avoidable problems in software product development. And the part that stings is that it is almost never AWS that is the problem. AWS is doing exactly what it was asked to do. The problem is what it was asked to do.

| High AWS bills are almost always a human problem. Someone made architectural decisions without thinking through the cost implications. Someone did not plan for scale. Someone picked the wrong service for the job. AWS just billed for it faithfully. |

Here is what actually causes it, and what actually fixes it.

The real reason AWS bills spiral out of control

Let us go through the most common culprits. These are not edge cases. These show up in product companies at every stage, from seed-funded startups to established SaaS businesses.

- Running high-end compute for low-compute workloads. This is probably the single most wasteful pattern. A product company spins up a large EC2 instance because they are not sure what size they need, or because the developer is used to having headroom. The workload uses 8 percent of the CPU most of the time. The other 92 percent is running idle and being billed at the full rate. There are right-sized instances for every workload. Most teams never revisit the sizing after launch.

- Over-engineering with too many services. AWS has hundreds of services. Some teams, especially those that have come from enterprise backgrounds or read a lot of architecture blogs, build systems that use far more of them than the product actually needs. A simple data pipeline gets four Lambda functions, an SQS queue, an EventBridge rule, an SNS topic, and a DynamoDB table, when a single well-written service on a small instance would have handled it at a tenth of the cost. Complexity is not sophistication. Most of the time, it is just a higher bill.

- Services that could be consolidated are running separately. Multiple RDS instances when one with proper database separation would do. Multiple NAT Gateways in different availability zones when the traffic pattern does not justify it. Separate environments that are running 24 hours a day when they are only used for 8 hours. These things compound. Each individual decision seems reasonable. Together, they add hundreds of dollars a month.

- Data transfer costs nobody planned for. This is the one that surprises people most. AWS charges for data leaving its network. Data going from EC2 to the internet. Data is going between regions. Data going from S3 to your application. If your product serves media, large files, or even just a lot of API responses, data transfer costs can become the largest line item on the bill. And most teams only discover this after the bill arrives.

- No auto-scaling, just always-on over-provisioning. Traffic is not constant. Most products have peak hours and quiet hours. Running the same capacity at 2 am as at 2 pm costs twice what it should. Proper auto-scaling configures a minimum capacity for quiet periods and scales up only when traffic demands it. Without it, you are paying for capacity you are not using for a significant part of every day.

| The honest summary: most AWS cost problems are not bugs in the billing system. They are the natural outcome of infrastructure that was built for convenience and speed, not cost efficiency. The decisions that create them are made at the start of a project when nobody is watching the bill yet. |

Why startups and product companies keep making the same mistakes

The pattern is consistent enough that it is worth naming directly.

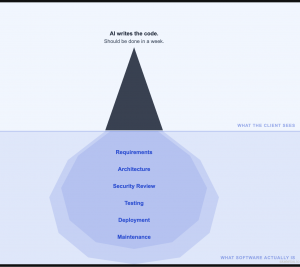

When a product company starts building, the priority is speed. Get to MVP. Launch. Iterate. Cost is a secondary concern because the team is small, the traffic is low, and the bill is manageable.

So nobody brings in a senior solution architect. Nobody maps the expected user load against the infrastructure plan. Nobody asks what happens to the bill when the product gets traction and traffic multiplies.

The developers who build the initial infrastructure are often good engineers. But solution architecture and cost optimization are specific skills that not every developer has. Building something that works is different from building something that works efficiently at scale. The first skill is taught in boot camps and tutorials. The second is learned over years of designing and running systems at real scale.

| Skipping the architect at the start is almost always more expensive than hiring one. You just do not find out until the bill arrives. |

The other reason this keeps happening is that nobody checks. Once infrastructure is running and the product is live, it becomes background noise. Engineers are focused on features. The bill gets paid every month. Nobody sits down with the architecture diagram and the cost report and asks: are we spending this money well?

By the time someone does ask, the architecture has grown organically for a year or two. Changing it is harder. The cost of the original decisions has compounded.

The CDN problem that costs more than people realise

Content Delivery Networks deserve a section of their own because the cost of not using one is frequently invisible until it is very visible.

When a user anywhere in India, or anywhere in the world, requests content from your application, that request travels to your AWS servers, the response travels back, and AWS charges you for that data transfer. Every image. Every video. Every large API response. Every file download.

A CDN caches that content at locations geographically close to your users. When someone in Mumbai requests an image that is cached in a Mumbai edge location, the data does not travel to your AWS origin server at all. The CDN serves it directly. AWS does not charge you for data transfer from the CDN cache the same way it charges for data leaving your EC2 instances or S3 buckets.

For most product companies, especially those serving media, dashboard data, or any kind of static content, a CDN reduces data transfer costs significantly. It also makes the product faster for users, which is a separate benefit. The two improvements together, lower bill and better performance, for a relatively small configuration investment.

Most startups do not configure a CDN in the initial build because it feels like an optimisation for later. Later comes when the bill does.

Minimum and maximum concurrent users — the conversation that never happens

Here is a planning conversation that almost never happens in product development but absolutely should.

What is the minimum number of concurrent users your infrastructure needs to support right now? What is the realistic maximum in the next 12 months? And what does that actually mean for the size and type of infrastructure you need?

Most teams answer the minimum question by accident when they pick an instance size. They answer the maximum number of questions not at all, and then scramble when a marketing campaign or press mention sends unexpected traffic.

Designing for concurrent users is not complicated. It requires understanding your application’s resource consumption per request, setting minimum capacity to handle baseline traffic, configuring auto-scaling to handle peaks, and testing whether that auto-scaling actually works before it is needed.

Without this planning, two things happen. Either the system is over-provisioned for baseline traffic, or you overpay constantly. Or it is under-provisioned for peaks, and users experience degraded performance exactly when it matters most, when lots of people are trying your product.

Both outcomes are avoidable with a few hours of proper architecture planning at the start. Neither is avoidable without it.

The phase-wise approach that actually works

The right way to think about cloud infrastructure for a product company is not: what does our architecture need to look like when we have 100,000 users? It is: what do we need for our first 1,000 users, and how do we design it so that scaling to 10,000 and then 100,000 does not require us to rebuild everything from scratch?

This is what a good solution architect plans for. Phase-wise architecture.

- Phase 1 — Launch. Minimum viable infrastructure. Right-sized compute for your actual expected load. Single region. Simple architecture. Managed services where they genuinely save operational effort. CDN configured from day one. Costs should be modest and predictable. The goal is to serve your first users reliably without overspending on capacity you do not have users for yet.

- Phase 2 — Growth. Auto-scaling configured and tested. Database read replicas if read traffic justifies it. Monitoring is set up so you can see what the system is actually doing under real load. Cost alerts are configured so you know before the bill surprises you. The goal is to handle growth without manual intervention and without paying for idle capacity.

- Phase 3 — Scale. Multi-region if your users and regulatory requirements justify it. Service decomposition if specific components are bottlenecks. Advanced caching. Reserved instances or savings plans for components where the baseline is now stable and predictable. The goal is efficiency at scale, not just survival at scale.

Most product companies try to build Phase 3 infrastructure on day one. They pay for it from day one. And they spend the next two years debugging complexity they did not need yet.

What a right-fit solution architect actually does for you

There is a version of solution architecture that produces impressive diagrams and complex systems. That is not what a product company at an early or growth stage needs.

What you need is someone who can look at what you are building, understand your user profile and growth trajectory, and design the simplest infrastructure that reliably serves your users today while being genuinely extensible for tomorrow.

Specifically, that person should be doing these things at the start of your project:

- Defining your compute requirements based on actual load estimates, not assumptions. This means understanding how many concurrent users you expect, what each request actually costs in CPU and memory, and right-sizing your instances from that calculation rather than from gut feel.

- Mapping your data transfer flows before you build them. Understanding which parts of your system move data, how much, and to where. Designing to minimise expensive data transfer from the start, including configuring CDN for the content types that benefit from it.

- Building in cost monitoring from day one. AWS Cost Explorer, billing alerts, and tagging resources so you know what each part of your system costs. This sounds boring. It is the difference between a surprise bill and a managed infrastructure.

- Planning the scaling path, not just the launch path. Designing auto-scaling groups, load balancer configurations, and database connections in a way that handles 10x your current traffic without manual intervention at 2 am.

- Recommending the right services, not the most services. Often, the most expensive AWS architectures are the ones with the most services. A good architect knows when a managed service genuinely saves cost and operational burden, and when a simpler approach on a modest instance does the job better and cheaper.

The cost of not doing this

Let us be specific about what is at stake.

Companies that build without proper architecture planning typically spend 40 to 60 percent more on cloud infrastructure than they need to. That is not an exaggeration. That number comes from actual cloud cost optimisation projects where teams go in, audit what is running, and find redundant services, idle capacity, missing CDN configurations, and wrong-sized instances throughout.

For a startup spending 3 to 5 lakh rupees per month on AWS, 40 percent waste is 1.2 to 2 lakh rupees per month. Over a year, that is 14 to 24 lakh rupees. Enough to hire a developer. Enough to fund a significant product feature. Gone to infrastructure that was never necessary.

And this is before accounting for the engineering time spent firefighting infrastructure problems that proper architecture would have prevented, or the user experience damage from a system that falls over under traffic because nobody tested it against real concurrent load.

| The solution architect you did not hire at the start costs you more every month in AWS bills than their consulting fee would have been. |

What to do if you are already in this situation

If your AWS bill is already high and you suspect it should not be, the first step is a proper audit. Not a five-minute look at the Cost Explorer dashboard. A real examination of what is running, why it is running, what it is doing, and whether there is a cheaper way to do the same thing.

The audit usually reveals three categories of savings:

- Immediate. Turn off or downsize things that are clearly over-provisioned or unused. Old snapshots. Idle environments. Instances running 24 hours a day that are only used during business hours. These savings are available immediately and require no architecture changes.

- Short term. Reconfigure services that are doing the right job in the wrong way. Add CDN where it is missing. Set up auto-scaling where instances are currently static. Split or consolidate services where the current split is costing more than it should. These take weeks, not months.

- Medium term. Rearchitect components where the original design was wrong for the workload. This is the harder work and the bigger saving. It requires planning, testing, and careful migration. But it produces infrastructure that scales predictably and costs what it should.

None of this requires moving away from AWS. AWS is genuinely a good platform. The services are reliable, the documentation is excellent, and the ecosystem is mature. The problem is almost never the platform. The problem is how it was set up.

One final thought

Cloud infrastructure is not an afterthought. It is not something you figure out later. It is a core part of how your product works and a significant line in your cost structure.

The companies that treat it as such from the beginning, that bring in the right expertise early, that plan for cost as deliberately as they plan for features, end up with infrastructure that grows with them and costs what it should.

The companies that treat it as a detail to sort out later end up with infrastructure that fights them and bills that tell the story of every shortcut that was taken.

The choice is not between AWS and something cheaper. The choice is between planning and not planning. Planning is always cheaper.

At Skeletos IT Service LLP, we help product companies and startups design cloud infrastructure that is right-sized from the start, and audit and optimise infrastructure that has grown beyond what it should cost. If your AWS bill does not feel right, reach out. We will tell you honestly what we find.

#AWS #CloudCost #SolutionArchitecture #Startup #ProductDevelopment #CloudOptimization #India #SkeletosIT